Levels of Confidence in Statistics

Recently we calculated the probabilities of several mating scenarios producing a homozygous recessive, assuming the test animal (invariably a male) is a carrier. As most recessive alleles are not wanted, the sooner a carrier can be identified, the sooner it can be culled from a breeding programme.

But knowing the odds isn’t quite enough: odds are an indicator, not a guarantee of outcome. By sheer luck a carrier may produce a homozygous recessive after just one mating. Or it could take three, ten or more matings; it all comes down to the random assortment of alleles and which sperm fertilises which egg.
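To see how much luck is involved, here is a minimal Python sketch. It assumes (hypothetically) that each mating independently has the same chance `p` of producing a homozygous recessive; `p = 0.25` corresponds to a carrier mated to a carrier with one offspring per mating. Under those assumptions the number of matings until the first homozygous recessive follows a geometric distribution. The function names are illustrative only.

```python
import random


def prob_first_on_mating(k: int, p: float) -> float:
    """Probability that the first homozygous recessive appears on mating k.

    Assumes each mating independently produces a homozygous recessive
    with probability p (a geometric distribution).
    """
    return (1 - p) ** (k - 1) * p


def simulate_matings_until_first(p: float, rng: random.Random) -> int:
    """Simulate one carrier and count matings until the first homozygous recessive."""
    matings = 1
    while rng.random() >= p:  # this mating produced no homozygous recessive
        matings += 1
    return matings


if __name__ == "__main__":
    p = 0.25  # hypothetical: carrier x carrier, one offspring per mating
    for k in (1, 3, 10):
        print(f"P(first on mating {k}) = {prob_first_on_mating(k, p):.4f}")

    rng = random.Random(1)  # fixed seed so the run is repeatable
    trials = [simulate_matings_until_first(p, rng) for _ in range(10)]
    print("Matings needed by 10 simulated carriers:", trials)
```

The probability of a first detection shrinks with each extra mating but never reaches zero, which is why a carrier can go undetected for some time.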

Besides knowing the odds, breeders need to know how many matings are required before they can be confident that a tested animal isn’t a carrier. We need to know the level of confidence.

The level of confidence (or confidence level) is a statistical concept. A 0% confidence level means you have no confidence whatsoever that repeating the procedure will give the same outcome. A 100% confidence level means you have no doubt at all that repeating it will give the same outcome. That certainty is only possible if you can use literally every individual in existence at the time of sampling, as it is the only way to be sure of catching every ‘outlier’ that might alter the results when the sampling is repeated. In practice this is never achievable, so a 100% confidence level is regarded as a theoretical concept.

Statistical analyses commonly use a 95% confidence level. This is a measure not so much of accuracy as of confidence in repeatability. If we take a random sample from a population and repeat the same procedure over and over, with a different random sample each time, we can expect the range we calculate to capture the true value for the entire population 95% of the time.

You may be familiar with a “bell curve”, or normal (Gaussian) distribution curve, in which a population is distributed symmetrically about its average value (the mean). In a normal distribution the mean sits at the 50th percentile, with half the population above it and half below:

© Optimate Group Pty Ltd
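Python’s standard library `statistics.NormalDist` can confirm that the mean of a normal distribution sits at its 50th percentile. The mean of 100 and standard deviation of 15 below are arbitrary illustrative values, not figures from any breeding data.

```python
from statistics import NormalDist

# Arbitrary illustrative population: mean 100, standard deviation 15
population = NormalDist(mu=100, sigma=15)

# Proportion of the population at or below the mean (cumulative distribution)
print(population.cdf(100))      # 0.5: half below the mean, half above

# Value at the 50th percentile (inverse cumulative distribution)
print(population.inv_cdf(0.5))  # 100.0: the 50th percentile is the mean
```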

This distribution is assumed to apply in certain circumstances (like ours), so random samples taken from a population are expected to fall evenly around the (unknown) mean. To be 95% confident is to expect that, if we sampled repeatedly, 95% of the ranges calculated from those samples would capture that unknown mean.
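The repeated-sampling idea can be checked with a small simulation: draw many random samples from a population whose mean we secretly know, build a 95% range from each sample, and count how often the range captures the true mean. This is a minimal sketch under stated assumptions: the population values are hypothetical, and for simplicity the population standard deviation is treated as known, so each range is the sample mean ± 1.96 standard errors (a z-interval).

```python
import random
from statistics import NormalDist, fmean

TRUE_MEAN = 50.0   # hypothetical population mean (unknown in real life)
SIGMA = 10.0       # hypothetical population standard deviation, assumed known
SAMPLE_SIZE = 30
REPEATS = 2000
CONFIDENCE = 0.95


def interval_from_sample(sample, sigma, confidence):
    """Two-sided z-interval for the mean, assuming sigma is known."""
    z = NormalDist().inv_cdf(1 - (1 - confidence) / 2)  # about 1.96 for 95%
    half_width = z * sigma / len(sample) ** 0.5
    centre = fmean(sample)
    return centre - half_width, centre + half_width


def coverage(seed=42):
    """Fraction of repeated samples whose interval captures TRUE_MEAN."""
    rng = random.Random(seed)
    hits = 0
    for _ in range(REPEATS):
        sample = [rng.gauss(TRUE_MEAN, SIGMA) for _ in range(SAMPLE_SIZE)]
        low, high = interval_from_sample(sample, SIGMA, CONFIDENCE)
        if low <= TRUE_MEAN <= high:
            hits += 1
    return hits / REPEATS


if __name__ == "__main__":
    print(f"Intervals capturing the true mean: {coverage():.1%}")
```

Changing the seed gives figures that hover around 95% rather than hitting it exactly: the ranges move from sample to sample while the true mean stays fixed.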

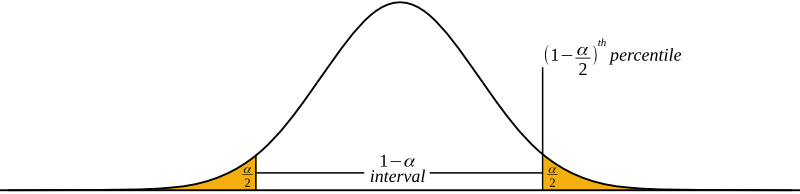

The confidence level is shown graphically and mathematically below. A two-sided 95% confidence interval covers the middle 95% of the distribution. Because the normal curve is symmetric, the remaining 5% is split equally between the two tails, so the interval begins at the 2.5th percentile and ends at the 97.5th:

© Optimate Group Pty Ltd

The confidence level expressed as a proportion rather than a percentage is called the confidence coefficient. For example, a confidence level of 95% has a confidence coefficient of 0.95.

In the graph above, the central area 1 - α (α is the Greek letter alpha) equals the confidence coefficient, and the range of values it spans is the confidence interval.

The remaining shaded parts together equal α. As the normal distribution is symmetric, the two parts are equal, so each equals α/2.

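Because the total area under the curve is 1 (equivalent to a confidence level of 100%), the confidence interval begins at the α/2 percentile and ends at the (1 - α/2) percentile. For a 95% confidence level, α = 0.05, so the interval runs from the 2.5th to the 97.5th percentile.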
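The chain from confidence level to coefficient, α and the two cut-off percentiles can be sketched with `statistics.NormalDist` on the standard normal curve (mean 0, standard deviation 1). The function name `interval_percentiles` is hypothetical, chosen just for this illustration.

```python
from statistics import NormalDist


def interval_percentiles(confidence_level_pct: float):
    """Return (coefficient, alpha, lower, upper) as proportions of the curve."""
    coefficient = confidence_level_pct / 100   # e.g. 95% -> 0.95
    alpha = 1 - coefficient                    # total area in both tails
    lower = alpha / 2                          # the interval starts here...
    upper = 1 - alpha / 2                      # ...and ends here
    return coefficient, alpha, lower, upper


standard_normal = NormalDist()  # mean 0, standard deviation 1

for level in (90, 95, 99):
    coef, alpha, lower, upper = interval_percentiles(level)
    z_low = standard_normal.inv_cdf(lower)
    z_high = standard_normal.inv_cdf(upper)
    # Area between the two cut-off points should equal the coefficient
    area = standard_normal.cdf(z_high) - standard_normal.cdf(z_low)
    print(f"{level}%: alpha={alpha:.2f}, "
          f"percentiles {lower * 100:g} to {upper * 100:g}, "
          f"z from {z_low:.3f} to {z_high:.3f}, area={area:.3f}")
```

The 90% line reports the 5th and 95th percentiles: a symmetric interval cut at those two points covers only 90% of the curve.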

Now that you have a better grasp of confidence levels, it will be easier to follow the next few weeks’ posts, in which we go over how to calculate the number of matings required for various confidence levels. See you then!
